New Feature: Filter Content by Exact Location

Leverage Image-Embedded Metadata to Derive Richer Data Insights

When smartphone users press the shutter button to take a picture, they’re often capturing far more than just an image.

Embedded image metadata can reveal everything from the manufacturer of the user’s smartphone to their precise geographical location at the moment they took the photo.

Such geolocation metadata can provide quick snapshots and insights for organizations that want to build a thorough media monitoring capability and cast as wide a net as possible, capturing brand mentions shared both in text and through images.

A World Beyond Text

While mining information from textual sources can provide deep insights to help brands understand their public perception, text embedded into images can provide a wealth of additional information that would otherwise be missed.

Optical Character Recognition (OCR) and algorithm-based visual object detection mean that both embedded texts and objects appearing within an image can be instantaneously parsed and added to feeds for further analysis and interpretation.

Significant Information

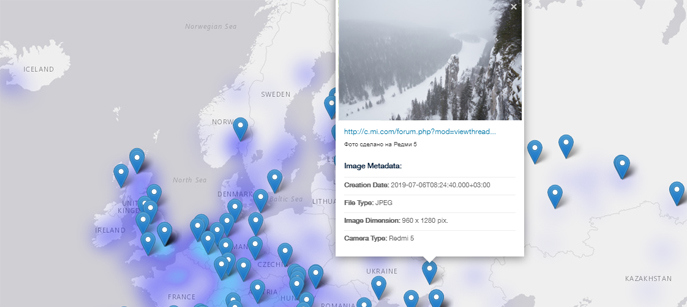

Beyond the geographic location where a photo was taken, the API can retrieve a number of advanced image particulars, including the compressed bits per pixel, the image file’s endianness, and the name of the owner.
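To make the geographic part concrete: image metadata in the EXIF format typically stores a GPS position as degrees, minutes, and seconds plus a hemisphere reference (N/S/E/W), which has to be converted to decimal degrees before it can be compared against a search area. The sketch below shows that conversion in Python. The function name `dms_to_decimal` and the sample coordinates are illustrative assumptions, not part of the Webz.io API or taken from the demo.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert a (degrees, minutes, seconds) triple to signed decimal degrees.

    `ref` is the hemisphere letter stored alongside the coordinate:
    "N" or "S" for latitude, "E" or "W" for longitude.
    """
    degrees, minutes, seconds = (Fraction(value) for value in dms)
    decimal = degrees + minutes / 60 + seconds / 3600
    # By convention, southern latitudes and western longitudes are negative.
    if ref.upper() in ("S", "W"):
        decimal = -decimal
    return float(decimal)


# Illustrative values only (hypothetical photo position).
latitude = dms_to_decimal((51, 54, 0), "N")
longitude = dms_to_decimal((2, 4, 30), "W")
print(latitude, longitude)
```

Using `Fraction` keeps the intermediate arithmetic exact, so rounding happens only once, in the final conversion to a float.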

The addition of the geolocation element to the image API means that users can:

- Retrieve all images in the news, blogs, and discussion posts that were taken in a specific geographical location, such as a neighborhood, city, or country.

- Retrieve all images taken in a certain area that match another element from a Webz.io API, such as a category or label.

Let’s Give it a Try

Want to see how it works in real life?

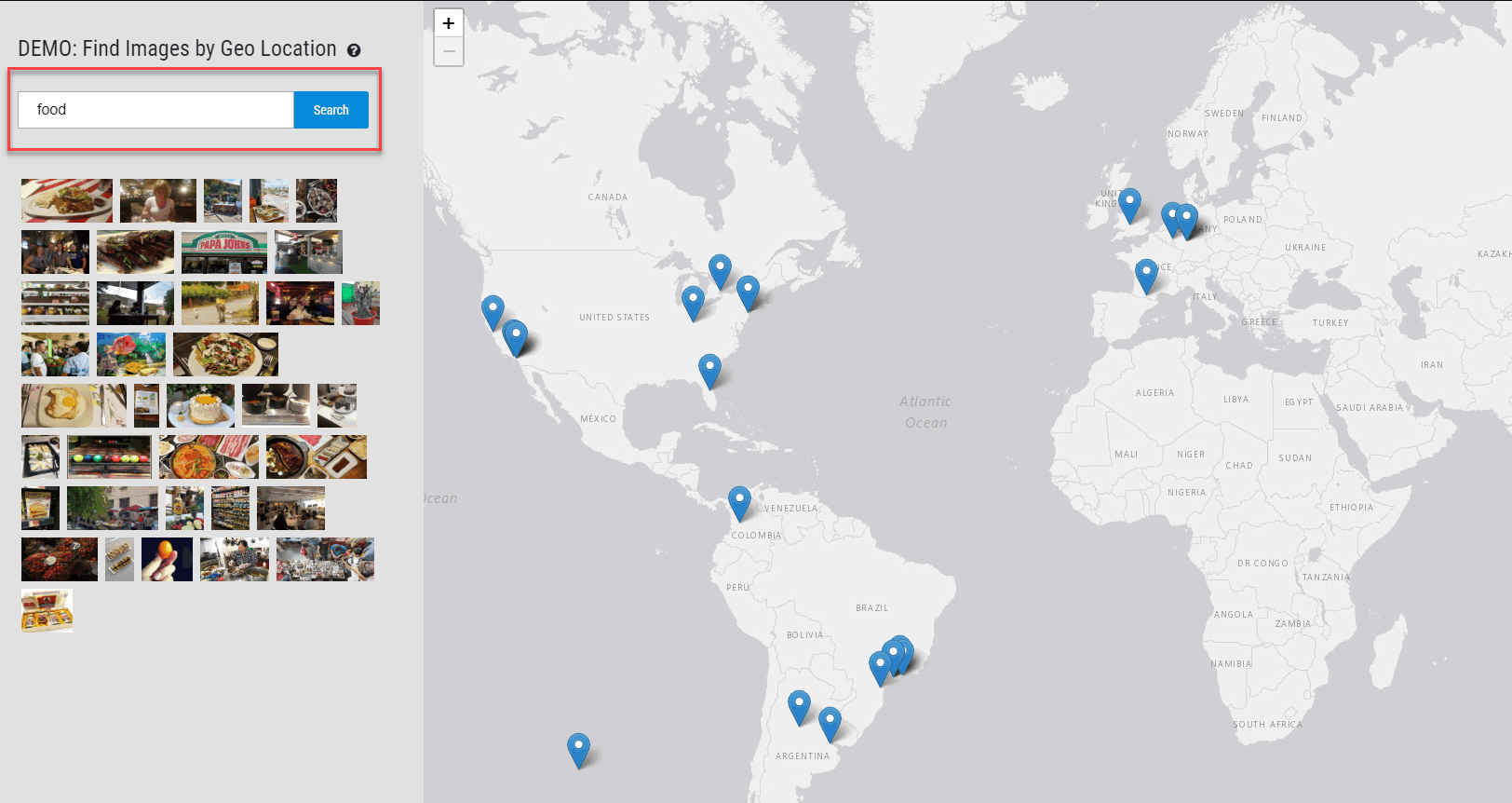

The online demo of the geolocation function lets you play around with location-based searches.

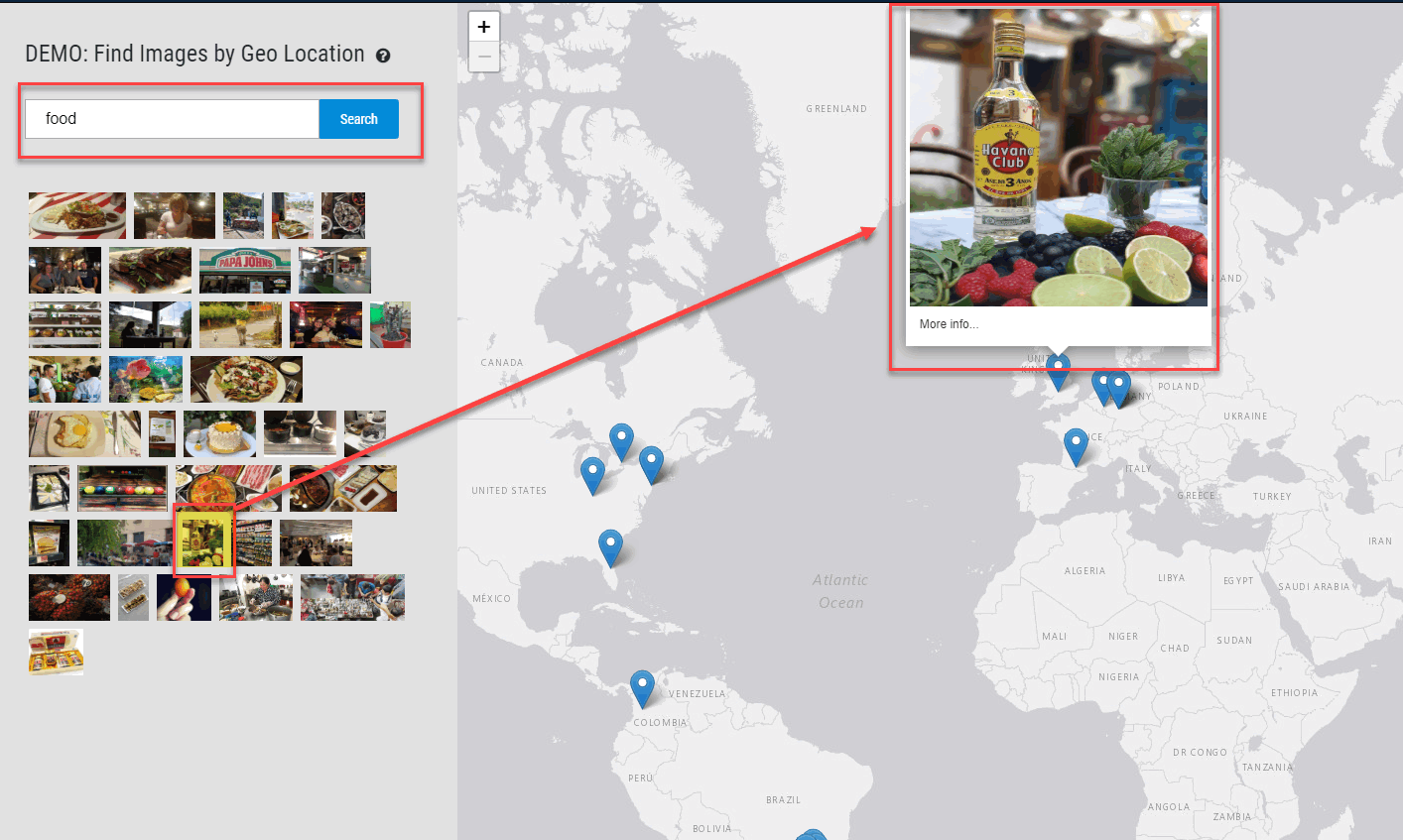

The API can be searched based on real-life objects (such as “cars” or “food”) that Webz.io detects in the images. For instance, if we search for the term “food” we can see results appearing from user-created data generated all over the world. The preview window that appears beneath the search box lets you view some of the results that have been found.

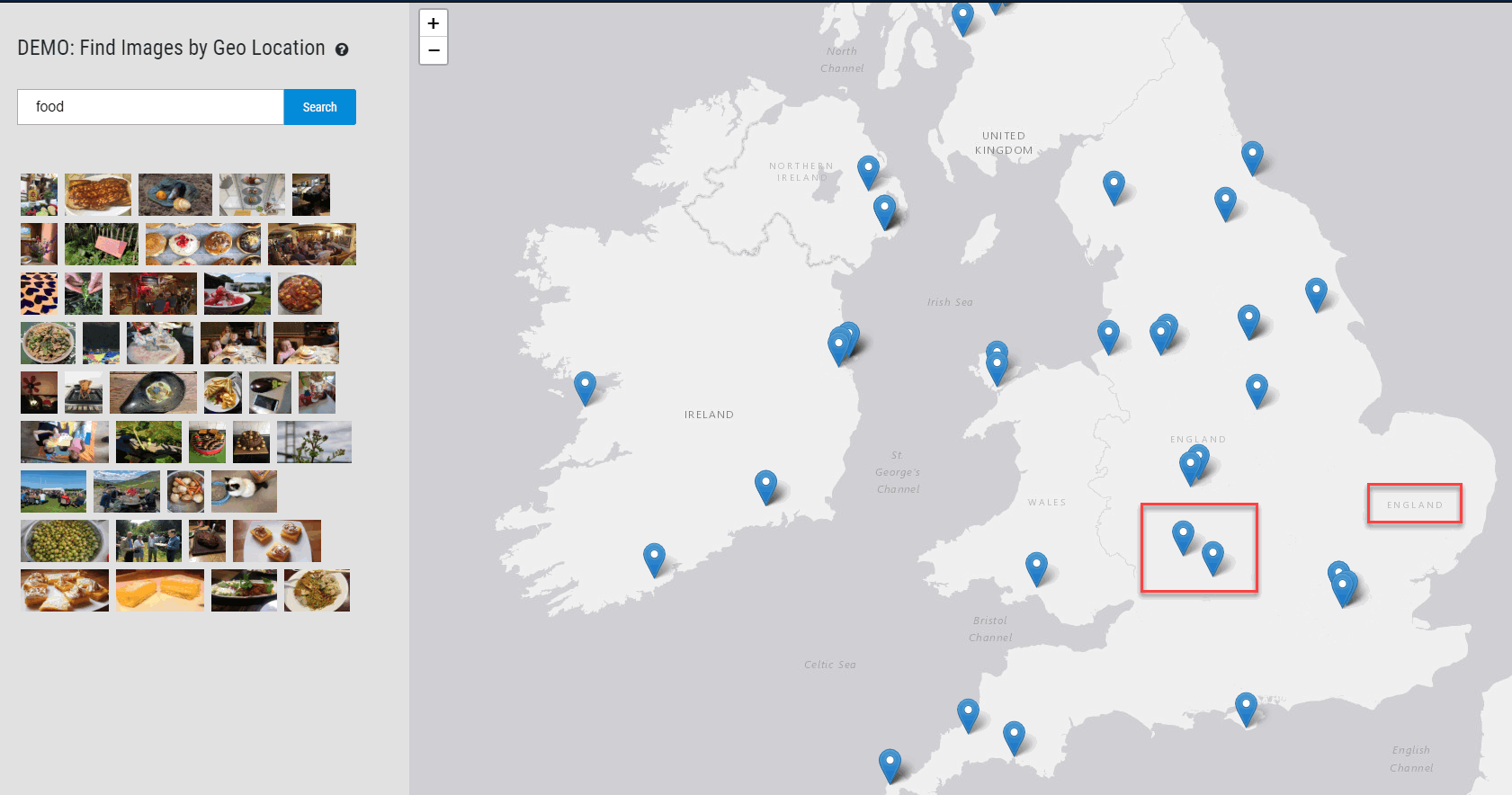

Let’s take a closer look at one of these results. We can see that there were results in the UK.

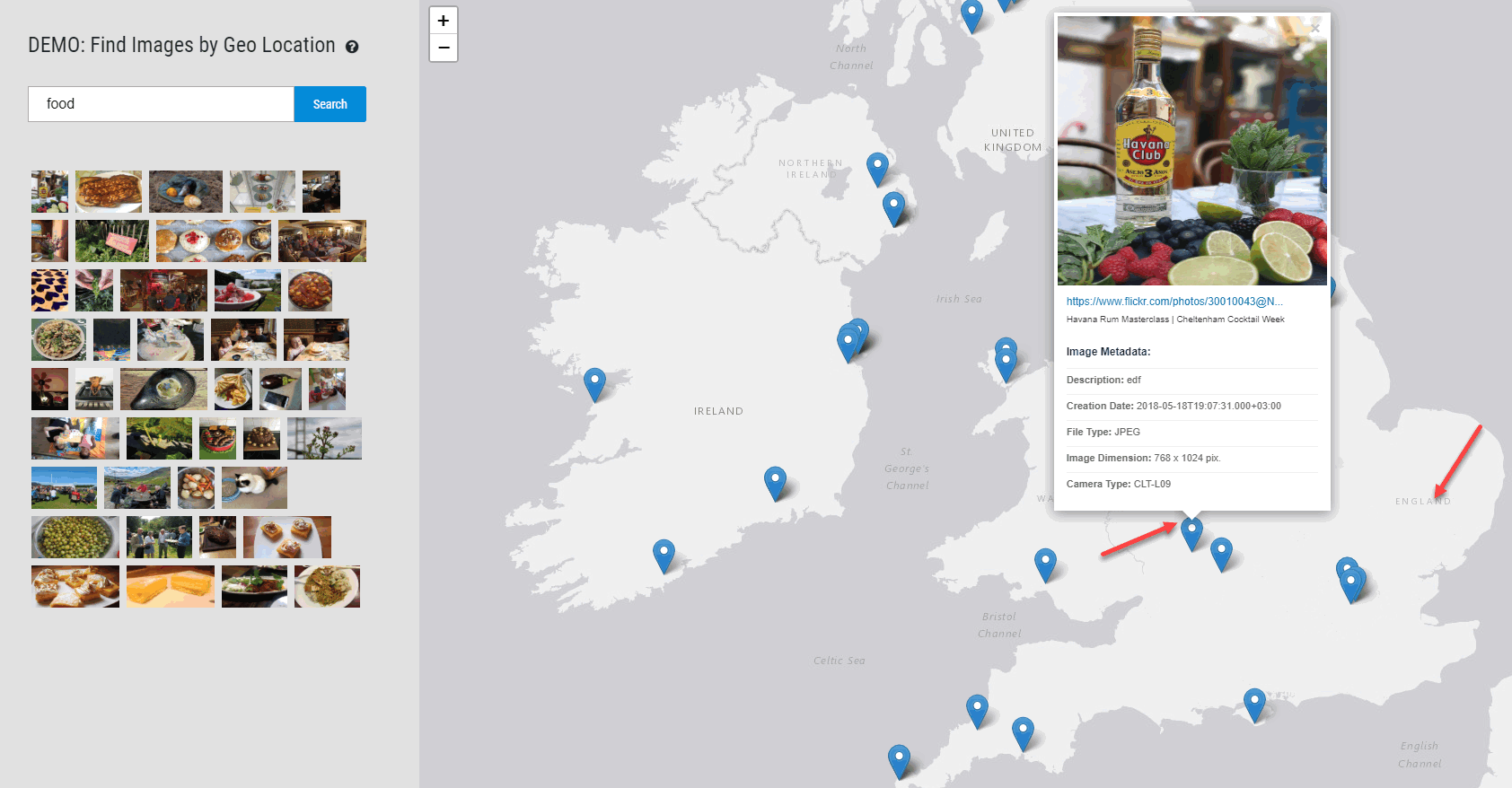

As we zoom in, we see that this result appears to be in England, and zooming in even further we can identify the exact location: Cheltenham, England.

Clicking on the “More Info” button, we can see details about the photograph, which was posted to Flickr by “Havana Rum Masterclass”.

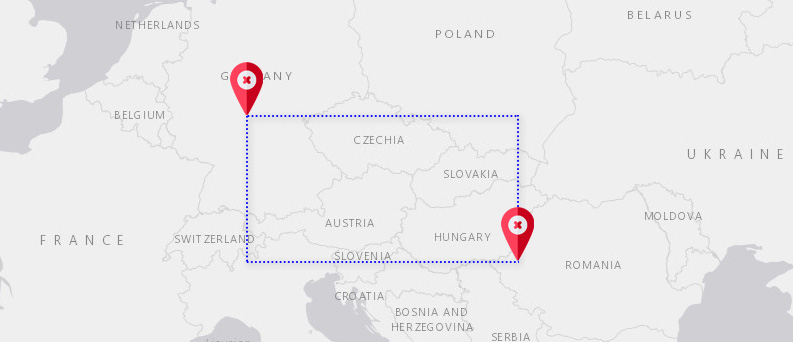

When using the API, users have to include two latitude and longitude pairs in their query, one for the top left corner and one for the bottom right corner of a box, in order to create a “geofence”: a rectangular box enclosing a geographical territory.

Once these have been included in the query, any images whose location metadata falls within this geographical rectangle will be returned in the results.
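The rectangle test itself is simple to reason about. The following Python sketch shows how a point can be checked against a box defined by its top left and bottom right corners. It is a minimal illustration of the idea only: `GeoBox`, `in_geofence`, and the sample records are hypothetical names and data, and the snippet does not reproduce the API's actual query syntax, which is described in the API documentation.

```python
from typing import NamedTuple, Tuple


class GeoBox(NamedTuple):
    """A rectangular geofence given by two (latitude, longitude) corners."""
    top_left: Tuple[float, float]      # north-west corner
    bottom_right: Tuple[float, float]  # south-east corner


def in_geofence(lat: float, lon: float, box: GeoBox) -> bool:
    """Return True if the point lies inside the box (edges included)."""
    north, west = box.top_left
    south, east = box.bottom_right
    if not (south <= lat <= north):
        return False
    if west <= east:
        return west <= lon <= east
    # The box crosses the 180th meridian, so the longitude range wraps around.
    return lon >= west or lon <= east


# Hypothetical image records carrying decimal-degree coordinates.
images = [
    {"id": "a", "lat": 51.9, "lon": -2.075},
    {"id": "b", "lat": 48.85, "lon": 2.35},
]

box = GeoBox(top_left=(52.0, -2.2), bottom_right=(51.8, -2.0))
matches = [img["id"] for img in images if in_geofence(img["lat"], img["lon"], box)]
print(matches)
```

Only the first record falls inside the box here. The wrap-around branch is included because a box whose left edge lies east of its right edge is a valid way to describe an area that straddles the 180th meridian.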

Get More Insights When Searching by Location

Searching for images within a specific location can collate valuable information from users who are almost certainly “on the ground”, something that is difficult to achieve when searching text strings.

When image results are interpreted in tandem with textual results, those monitoring public perception, tracking the development of online discussions, or conducting academic research into local conversations can gain a deeper understanding of the issues they are researching.

Interested in testing the new feature for yourself? Check out the simulation demo or review our API documentation to get started.